Everything you need to know about usability testing questions

Usability testing is a well-established practice, and there are best practices to consider when selecting the right questions for your study. A well-run usability testing session surfaces behavioral insights and functionality issues, and it gives you feedback you can use to improve the design and the overall user experience.

Let’s start by looking at the most common question types to build a foundation; then, we’ll give you actual questions you can use in your usability tests.

Definition and importance of usability testing

Usability testing is a method used to evaluate the usability of a product, service, or digital experience by observing users completing specific tasks and answering questions while interacting with it. This process helps to understand how easy it is for the target audience to use the product or service, identify usability problems, and collect both qualitative and quantitative data to determine the usability of the design.

Usability testing is essential for ensuring that the product or service is user-friendly, meets the target audience's needs, and reduces development costs and time by identifying usability problems early on. By focusing on specific tasks, you can pinpoint exactly where users struggle and make necessary adjustments to improve the user experience.

Benefits of usability testing for user experience

Usability testing provides numerous benefits for user experience, including:

- Identifying usability problems: By observing users, you can identify areas where they encounter difficulties, allowing you to make targeted improvements.

- Meeting user needs: Ensuring the product or service meets the target audience's needs is crucial for its success. Usability testing helps you understand these needs better.

- Reducing development costs and time: Identifying usability problems early on can save significant time and resources by preventing costly redesigns later in the development process.

- Building a user-targeted offering: Understanding the needs and expectations of your target audience allows you to create a product that resonates with them.

- Improving overall user experience: Gathering user feedback and iterating on the design based on this feedback leads to a more refined and user-friendly product.

By incorporating usability testing into your development process, you can create a product that meets and exceeds user expectations, leading to higher satisfaction and engagement.

The two types of usability testing questions

There are two common question types for gathering feedback, and you'll need to choose between them: open-ended and closed-ended questions. Keep in mind that usability testing focuses on how effective a design is, whereas user testing assesses whether a product is needed and useful during early development stages.

Open-ended questions

Open-ended questions encourage free-form answers. They cannot be answered by “yes” or “no” responses. They start with words like “how,” “what,” “when,” “where,” “which,” and “who.”

TIP: Avoid “why” questions because they lead people to make up answers when they don't have a response. Instead, say something like, “please tell me more about that.”

When conducting qualitative usability research, you want to ask more open-ended questions because that's how you get human insight. Small, moderated tests don't produce statistically significant results, so there's little value in collecting answers meant for statistical analysis. Instead, focus on digging deeper and getting richer data with open-ended questions.

Here are examples of open-ended questions:

- How does this product feature make you feel?

- What, if anything, do you want to change?

- How easy or difficult is this process? Please explain your answer.

Related reading: Ask the right questions in the right way

Closed-ended questions

Closed-ended questions have a set of definitive responses, such as “yes,” “no,” “A,” “B,” “C,” etc. These questions work well in unmoderated surveys or typed responses because participants don't have to write much; they simply confirm or reject what you're asking about. These answers can be analyzed statistically, so they're better suited for quantitative research than qualitative.

Here are examples of closed-ended questions:

- Does this product feature make you feel empowered?

- Do you want to change anything about this?

- Is this process easy or difficult?
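Because closed-ended answers come from a fixed set, summarizing them can be as simple as counting. The sketch below is only an illustration: the `responses` list is made-up sample data, and `summarize` is a hypothetical helper name, not part of any testing tool.

```python
from collections import Counter

# Made-up answers to "Is this process easy or difficult?"
responses = ["easy", "difficult", "easy", "easy", "difficult", "easy"]


def summarize(answers):
    """Return each answer's share of all responses, as a percentage."""
    counts = Counter(answers)
    total = len(answers)
    return {answer: round(100 * n / total, 1) for answer, n in counts.items()}


summary = summarize(responses)
print(summary)
```

With only a handful of participants, treat percentages like these as a rough signal rather than a statistically meaningful result.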

7 factors to consider before creating usability test questions

When determining what types of questions to ask during usability testing, it’s important to consider a few different factors to ensure the prompts will gather the necessary insights. The questions you ask shape how well you understand the way users interact with your product, and they also help you select participants who resemble the target audience and can provide relevant, actionable feedback.

Some factors to consider include:

1. Research goals

“I start every project at the end – what do you need to solve for with this research? What decisions do you need to be able to make when it’s completed? It is critical that everyone involved is crystal clear on those things.” – Angie Amon, Mailchimp, UX Research Manager

To start, clarify the specific objectives of the usability testing. What do you hope to learn or achieve through the testing process? Aligning the questions with your team's research goals allows you to gather relevant and actionable insights.

2. User persona and context

Consider the characteristics of your target audience, such as:

- Demographics

- Experience level

- Goals

- Context in which they will interact with your product

Tailor the questions to address the intended user persona's needs, preferences, and behaviors.

3. Task scenarios

Structure the questions around specific task scenarios you expect users to perform during the testing session. Focus on tasks representative of typical user interactions and goals to glean insights into the usability of key features and functionalities.

4. Question types

Use a variety of question types to gather both qualitative and quantitative data. Open-ended questions encourage users to provide detailed feedback and insights. Closed-ended questions such as Likert scale ratings, which ask users to pick a point on a scale like “strongly agree,” “agree,” “neutral,” “disagree,” and “strongly disagree,” make responses easier to quantify and compare.

Additionally, as you write usability testing questions, watch for common pitfalls in their wording, such as leading phrasing, so the answers you collect are reliable.

5. Usability metrics

Consider incorporating standardized usability metrics, such as the System Usability Scale (SUS) or the Net Promoter Score (NPS), into your survey to assess overall usability and user satisfaction.

6. Sequence and flow

Organize the questions logically and sequentially to guide participants smoothly through the testing process. Start with broader, more general questions before delving into more specific usability aspects.

7. Bias

When conducting usability testing, consider potential biases influencing participants' responses. Avoid leading questions that may steer participants toward a particular response or influence their feedback. Instead, strive to ask neutral, unbiased questions that encourage honest and objective feedback from participants.
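As an illustration of the usability metrics mentioned in factor 5, here is a minimal sketch of how a Net Promoter Score is typically calculated from 0-10 "how likely are you to recommend" ratings. Ratings of 9-10 count as promoters and 0-6 as detractors. The function name and the sample ratings are hypothetical.

```python
def net_promoter_score(ratings):
    """Compute NPS from 0-10 'likelihood to recommend' ratings.

    NPS = percentage of promoters (9-10) minus percentage of detractors (0-6).
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Made-up ratings from ten participants
sample_ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
nps = net_promoter_score(sample_ratings)
print(nps)
```

The result ranges from -100 (all detractors) to 100 (all promoters). Ratings of 7 and 8 count toward the total but not toward either group.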

Usability test methods and approaches

There are various usability test methods and approaches that can be used to evaluate the usability of a product or service. Each method has its own strengths and can be chosen based on the specific needs of your project.

- Moderated usability testing: This involves observing users interacting with the product or service in person or remotely, with a moderator present to guide the test and ask questions. This method allows for real-time feedback and deeper insights into user behavior.

- Remote testing: In this approach, users interact with the product or service from their own location rather than in a lab. Remote sessions can be moderated or unmoderated. This method is useful for reaching a broader audience and gathering diverse feedback.

- Unmoderated usability testing: Users are asked to complete specific tasks on their own, without a moderator observing or guiding them in real time. This method is efficient for gathering large amounts of data quickly and is often used for quantitative analysis.

- Website usability testing: This involves evaluating the usability of a website by observing users interacting with it. It helps identify navigation issues, content clarity, and overall user satisfaction.

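- System usability scale (SUS) testing: Users respond to ten standardized statements, each on a five-point scale from “strongly disagree” to “strongly agree,” and the answers are combined into a single score from 0 to 100. This standardized tool provides a quick and reliable measure of usability.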

- Single ease question (SEQ) testing: Users rate the ease or difficulty of a task on a scale of 1-7. This method helps identify specific pain points in the user journey.

When conducting usability testing, it’s essential to ask the right questions to gather valuable insights from users. This includes asking open-ended questions, avoiding leading questions, and using scales and multiple-choice questions to collect quantitative data. By using these methods and approaches, you can gather valuable user feedback and iterate on your design to create a better user experience.
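To make the SUS method above concrete, here is a sketch of the standard scoring procedure, assuming the usual ten-item questionnaire answered on a 1-5 scale. Odd-numbered items are positively worded and contribute the answer minus 1. Even-numbered items are negatively worded and contribute 5 minus the answer. The sum is then multiplied by 2.5. The function name and the sample answers are hypothetical.

```python
def sus_score(answers):
    """Compute a SUS score (0-100) from ten answers on a 1-5 scale."""
    if len(answers) != 10:
        raise ValueError("SUS needs exactly 10 answers")
    if any(a not in range(1, 6) for a in answers):
        raise ValueError("each answer must be an integer from 1 to 5")

    total = 0
    for position, answer in enumerate(answers, start=1):
        if position % 2 == 1:
            total += answer - 1  # odd items: positively worded
        else:
            total += 5 - answer  # even items: negatively worded
    return total * 2.5


# Made-up answers from one participant, in questionnaire order
participant = [4, 2, 5, 1, 4, 2, 5, 2, 4, 1]
score = sus_score(participant)
print(score)
```

A SUS score is not a percentage, so it is usually averaged across participants and compared against a benchmark rather than read on its own.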

The best questions to ask in your usability tests

Usability testing questions fall into four phases:

- Screener questions: These usability test questions evaluate a participant's qualifications to eliminate participants who don't qualify.

- Pre-test usability questions: These give context to your participant's behavior and test answers so you can learn more about the participant before the test influences their answers.

- Usability test questions: In-test usability questions relate directly to your observations while users interact with your product.

- Follow-up usability test questions: These questions give you another chance to ask participants about their experience before the end of the usability study.

Screener question examples

Screener questions, or screeners, are questions intended to evaluate a participant's qualifications and target specific groups of participants. These multiple choice questions eliminate participants who don't qualify to participate in your study.

Screeners allow you to find participants based on demographics, that is, statistical data about a population that identifies variables and subgroups, as in the following examples:

- How old are you?

- How do you describe your gender?

- What's your relationship status?

- What's your household income?

- How do you describe your ethnicity?

You can also filter participants based on psychographics, which categorize a population by characteristics like interests, activities, and opinions:

- How do you like to spend your free time?

- What's the last big ticket item you bought?

- Have you ever boycotted a brand? Please explain.

- How many hours a day do you spend on your phone?

To get started, identify the right target audience before creating screener questions, which ensures you get actionable feedback. For example, if most of your customers fall into a specific age range or geographic location, these might be the parameters you set. However, if you want to hear from those who may not be so familiar with your product for an unbiased outlook, think about enlisting users from outside the usual demographic.

The trick to effective screener questions is asking them in a way that identifies your audience without leading participants to a particular answer or revealing specific information about your test.

For example, if you're looking to test out a new mobile app intended for parents who live in the midwestern United States, you want to find participants who fit the criteria. Instead of asking someone whether they live in the Midwest, you would ask which region they live in and give answer choices for many different areas. Add distractor answers to your screener questions to draw attention away from the answer you're looking for.

Here's how we would find our target audience of parents who live in the midwestern United States via screener questions:

1. In which United States region do you currently reside?

- Northeast (Deny)

- Midwest (Accept)

- South (Deny)

- West (Deny)

- Alaska (Deny)

- Hawaii (Deny)

- None of the above (Deny)

- I do not live in the United States (Deny)

2. Which of the following best describes your current status:

- Married with children (Accept)

- Divorced with children (Accept)

- Never married with children (Accept)

- Married with no children (Deny)

- Divorced with no children (Deny)

- Never married with no children (Deny)

As you can see, we've woven the response we're looking for with distractor responses to increase our odds of getting the right participants without revealing details about the test or who we're looking for.
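The accept/deny logic in the screener above can be expressed as a small filter. This is only a sketch: the `SCREENER` mapping, the `qualifies` helper, and the candidate records are hypothetical and not tied to any particular testing platform.

```python
# Accepted answers per screener question; any other answer is a deny.
SCREENER = {
    "region": {"Midwest"},
    "family_status": {
        "Married with children",
        "Divorced with children",
        "Never married with children",
    },
}


def qualifies(answers):
    """Return True only if every screener answer is in the accepted set."""
    return all(
        answers.get(question) in accepted
        for question, accepted in SCREENER.items()
    )


# Made-up candidate responses
candidates = [
    {"id": "p1", "region": "Midwest", "family_status": "Married with children"},
    {"id": "p2", "region": "West", "family_status": "Divorced with children"},
    {"id": "p3", "region": "Midwest", "family_status": "Married with no children"},
]

accepted_ids = [c["id"] for c in candidates if qualifies(c)]
print(accepted_ids)
```

A participant must pass every question, so a single deny answer (or a missing answer) is enough to screen them out.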

Pre-test usability question examples

Now that you've set up screener questions to find the ideal participants, it's time to ask questions to learn more about your participants before the test influences how they might answer. Pre-test usability questions give context to your participant's actions and test answers. They can be open or closed-ended questions.

For example, you might want to know how experienced your participant is with mobile apps before the usability study. This will help you better understand why they take specific actions.

Here are some examples of pre-test questions:

- Tell me about your current role

- Describe your family structure

- What work-related mobile apps do you use?

- How often do you perform a specific task?

- How familiar are you with…?

Usability test question examples

In-test usability questions are questions directly related to your testing objective. They should start general and get more specific. Always ask open-ended questions during your test.

Whether qualitative or quantitative, usability testing helps you understand the what, why, and how behind your customers and their actions. You can discover bugs or errors, get customer feedback, know your audience, learn whether something works as expected, and more.

Unmoderated usability test

When running an unmoderated usability test, you want to ensure your questions allow for open and honest feedback. Letting participants know when you're open to critical or negative feedback is also a good idea. After a participant finishes a task, here are some common open-ended questions to ask:

- What is your first impression of the task you just completed?

- What, if anything, did you like about the experience?

- What, if anything, did you not like about the experience?

- What, if anything, was unclear or confusing?

- Which of the two tasks did you prefer? Please explain your answer.

- What do you think about the process for [action]?

Moderated usability test

When running a moderated usability test, the moderator can probe deeper into the participant's responses. A good rule of thumb is to stay quiet and let the participant do most of the talking, but here are questions for prompting feedback:

- What's your opinion on how the information is laid out?

- I see that you [action]. Can you explain your thought process?

- Based on the previous task, how would you have preferred the information?

- You seemed to rush through the last step. What were you thinking?

Follow-up usability test questions

Follow-up usability test questions are the questions that close out the session. These might include clarifying or probing questions.

After a usability test, you have another chance to ask participants about their experience for additional context. Get feedback on the experience overall and see if there's something they want to talk about that you didn't ask. Follow-up questions can be closed or open-ended.

- What was your overall impression of the experience?

- What, if anything, surprised you about the experience?

- If you could change anything about it, what would you change?

- How did the experience compare to past experiences?

- Is there anything we didn't ask you about this experience that we should have?

- What final comments do you want to make before ending this interview?

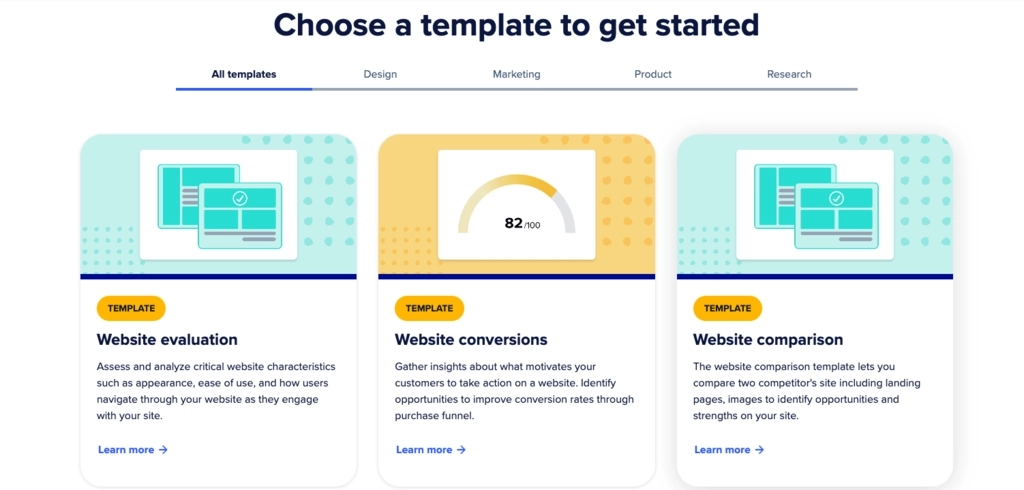

As you can see, no test is complete without usability questions. For inspiration, take a look at some more usability testing examples. Or, to start testing, browse the UserTesting templates gallery for your next project. The gallery contains pre-built templates designed by research experts, which can be used as-is or customized to fit your needs.
